Everyone wants ‘agentic strategy.’ Few have the visibility to build it.

There’s a massive gap between wanting an agentic strategy and actually having one. At recent industry events, I’ve heard it described as a competence issue dressed up as a strategy problem. Everyone’s talking about it. Few understand it. Leaders want to know how AI traffic will reshape their businesses, what their competitors are doing and whether they’re already behind. But when I press on specifics, like which agents are active on their properties and what those agents are doing there, almost nobody has answers.

There’s a fundamental reason for that: You can’t build strategy around something you can’t see clearly. Most organizations don’t know what AI-driven traffic is actually doing on their properties. They’re reacting to effects, not causes. And without that visibility, every response is a guess.

Pressure, complexity and rash decisions

The pressure is real. Google search referrals to publishers fell 34% year over year. Businesses watching that kind of decline are looking for someone to blame, and AI scrapers are an obvious target. Some have started blocking broadly. People Inc., for example, announced at the IAB Annual Leadership Meeting (ALM) that it had begun blocking most bots by default on some of its sites. It’s a logical response to traffic loss, but it’s also a blunt one.

The problem is that AI-driven traffic isn’t monolithic. Data from Human’s 2026 State of AI Traffic & Cyberthreat Benchmark Report, based on more than one quadrillion interactions observed in 2025, shows how fast the composition is shifting: In January 2025, training crawlers accounted for 90% of AI-driven traffic. By December, their share had dropped to 74% as requests from real-time scrapers and agentic AI grew rapidly. Agentic AI traffic alone grew 7,851% year over year. More than 95% of all AI-driven traffic was concentrated in just three industries: retail and e-commerce, streaming and media (including publishers), and travel and hospitality.

For organizations in those verticals, this is a fundamental shift in how traffic and discovery work, and it’s changing faster than most teams can track.

The false binary

The instinct to block broadly is understandable. But it’s built on a false choice. For publishers, blocking all AI traffic stops the scraping but also forfeits potential referrals and revenue opportunities. For retailers, it turns away legitimate shopping agents along with the fraud. For brands, it does more than block scraping; it makes them invisible to AI-driven discovery and recommendation channels.

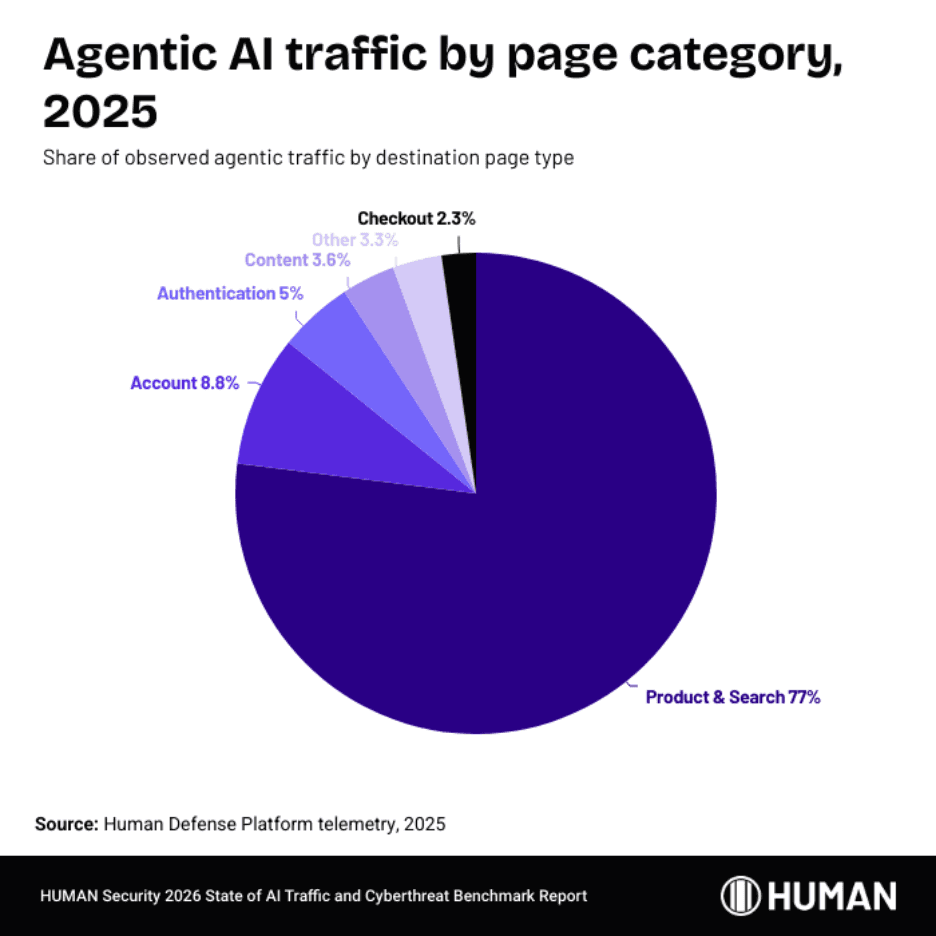

In 2025, 77% of observed agentic activity landed on product and search pages. But nearly 9% hit account pages, 5% landed on authentication flows and 2.3% reached checkout. Agents aren’t just browsing. They’re logging into accounts, managing sessions and completing purchases autonomously. And this is early. As consumers grow more comfortable with delegating purchases and bookings to agents, the volumes will compound.

You can’t build strategy on a binary choice when the traffic is this diverse and the behavior this complex.

From ‘bot or not’ to ‘trust or not’

There is a third path: visibility-led control. You gain leverage when you can see which agents are accessing your systems, how they behave, what they’re doing or attempting to do and what outcomes they create. You gain the ability to make decisions that reflect your business interests rather than someone else’s model assumptions.

The old framework for managing automated traffic was binary: Is this a bot or not? That question no longer holds. The new one is broader: Does this interaction serve my business or work against it? That’s not just a security question. A training crawler scraping your product catalog isn’t committing fraud. It’s behaving exactly as designed. But a publisher might reasonably decide that exchanging content for zero referral traffic isn’t a trade worth making. A retailer might welcome the same crawler because broader AI training means their products surface in more agent-driven recommendations.

The decisions that matter now

Across all interactions analyzed in Human’s report, only half a percentage point separates the rate of benign automation from the rate of malicious automation. But even within that benign traffic, the business implications vary enormously. A real-time scraper feeding an AI search product might drive citation traffic back to you, or it might summarize your reporting so thoroughly that nobody clicks through. The difference matters, and you can’t act on it without seeing it clearly.

Those are the kinds of decisions organizations should make right now: which crawlers to block and which to allow, which agents to welcome and which to watch, where the line sits between competitive intelligence being gathered against you and new demand being routed to you. Today, most organizations lack the visibility to make those calls with any confidence. The ones who build it will have the advantage that matters most: the ability to act deliberately while everyone else is still guessing.

This op-ed represents the views and opinions of the author and not of The Current, a division of The Trade Desk, or The Trade Desk. The appearance of the op-ed on The Current does not constitute an endorsement by The Current or The Trade Desk.